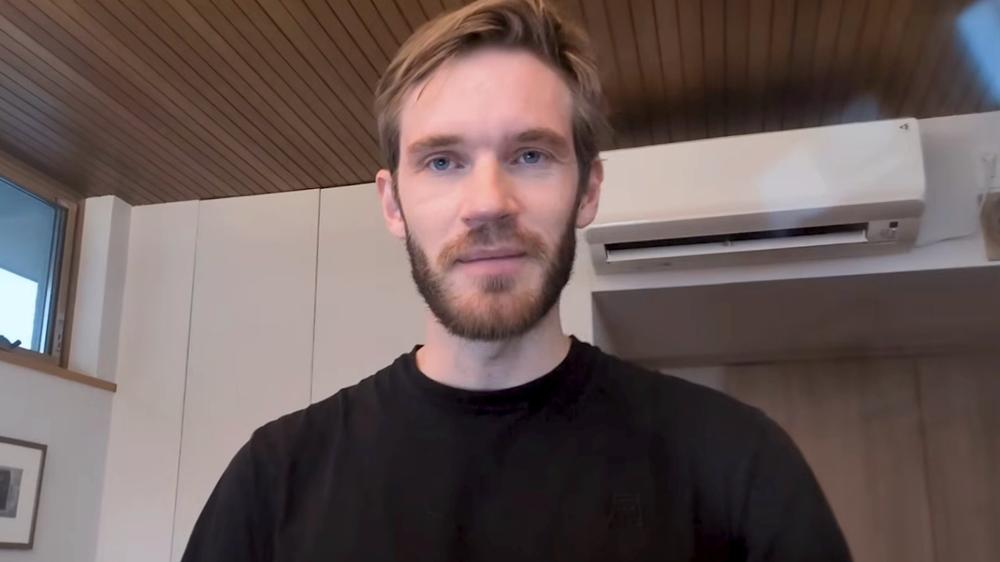

PewDiePie has revealed that he trained his own AI model and claims it outperformed ChatGPT on a coding benchmark, documenting the process in a new video that details months of testing, failures, and hardware issues.

The creator explained that the project started as a personal learning challenge. He clarified that he did not build a new AI from scratch, but instead fine-tuned an existing large language model using custom datasets and coding benchmarks.

According to PewDiePie, the goal was to improve coding performance in a specific format used by AI coding agents. He said his model initially performed poorly, scoring below top models before gradually improving after multiple rounds of retraining and dataset changes.

Benchmark claims and training process

In the video, PewDiePie said he used a coding-focused benchmark to test performance and compared results against models including DeepSeek, Meta’s Llama, and ChatGPT.

He explained that his model started around 8% on the benchmark before reaching 16% after format adjustments. The creator later introduced reasoning data and additional fine-tuning, claiming one run eventually hit 19.6%, which he said briefly surpassed ChatGPT’s score at the time.

However, he later discovered benchmark contamination in his dataset, meaning some training data may have overlapped with benchmark questions. He said this invalidated the result and forced him to retrain the model.
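The video does not specify how the overlap was found. As a rough illustration of what a contamination check can look like, the sketch below flags training samples that share a long word sequence with any benchmark item. The function names, the 8-word window, and the sample strings are all assumptions made for this example, not details from PewDiePie's setup.

```python
import re


def ngrams(text, n=8):
    """Return the set of lowercase word n-grams in a string."""
    tokens = re.findall(r"\w+", text.lower())
    return {tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)}


def find_contaminated(train_samples, benchmark_items, n=8):
    """Return indices of training samples sharing any n-gram with the benchmark.

    Hypothetical helper: a shared run of n words is treated as a sign that
    a benchmark question may have leaked into the training data.
    """
    benchmark_ngrams = set()
    for item in benchmark_items:
        benchmark_ngrams |= ngrams(item, n)
    return [
        index
        for index, sample in enumerate(train_samples)
        if ngrams(sample, n) & benchmark_ngrams
    ]


if __name__ == "__main__":
    # Made-up strings, used only to show the check running.
    train = [
        "Write a function that returns the sum of two integers a and b.",
        "Explain how to reverse a linked list in place.",
    ]
    benchmark = [
        "Task: write a function that returns the sum of two integers a and b, then print it.",
    ]
    print(find_contaminated(train, benchmark))
```

Flagged samples would typically be removed before retraining. Exact word matching like this misses paraphrased questions, so it only gives a lower bound on overlap.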

After retraining on a coding-specific version of the base model, PewDiePie claimed the score rose again, eventually reaching 36% after fixing benchmark issues, and later 39.1% following post-training adjustments.

The video also detailed several technical problems during development, including system crashes, overheating, and hardware failures. PewDiePie said one GPU failed during training and that power cables and electrical limits became an ongoing issue due to the high computational load.

He described the setup as heavily modified and said he had to repeatedly rebuild parts of the system to keep training running.

Despite the setbacks, he said the project helped him learn more about machine learning workflows, data preparation, and model training.

While presenting the benchmark results, PewDiePie acknowledged limitations, noting that strong performance on a single benchmark does not guarantee broader improvements. He said he still plans to test the model on additional coding benchmarks before deciding whether to release it publicly.

He also noted that newer models have since appeared, including Qwen 3, which he said scores higher on the same benchmark, meaning further work would be needed to stay competitive.

The video ends with PewDiePie saying the project was primarily about learning through experimentation and failure, adding that he may continue developing the model or move on to a new project in the future.
